PersistWorld

Project Page | GitHub | Paper (ArXiv)

Authors: Jai Bardhan, Patrik Drozdík, Josef Šivic, Vladimír Petrík

Affiliation: Czech Institute of Informatics, Robotics and Cybernetics (CIIRC), Czech Technical University in Prague

Overview

PersistWorld stabilizes long-horizon robot world model rollouts via RL post-training on autoregressive outputs.

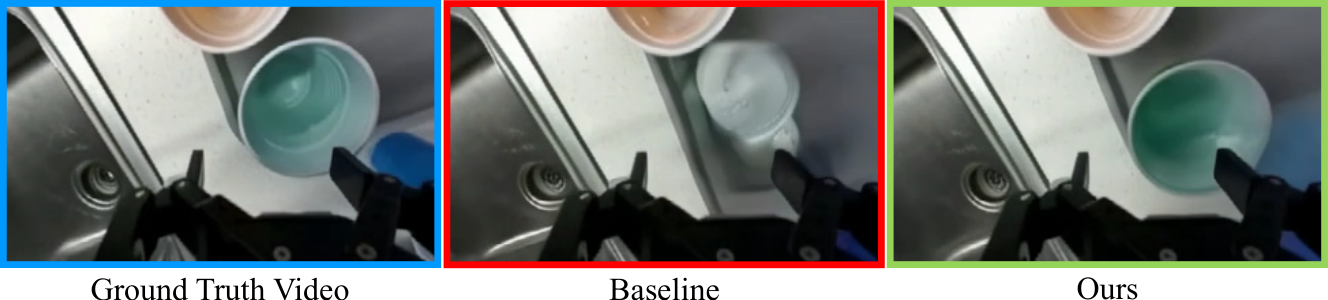

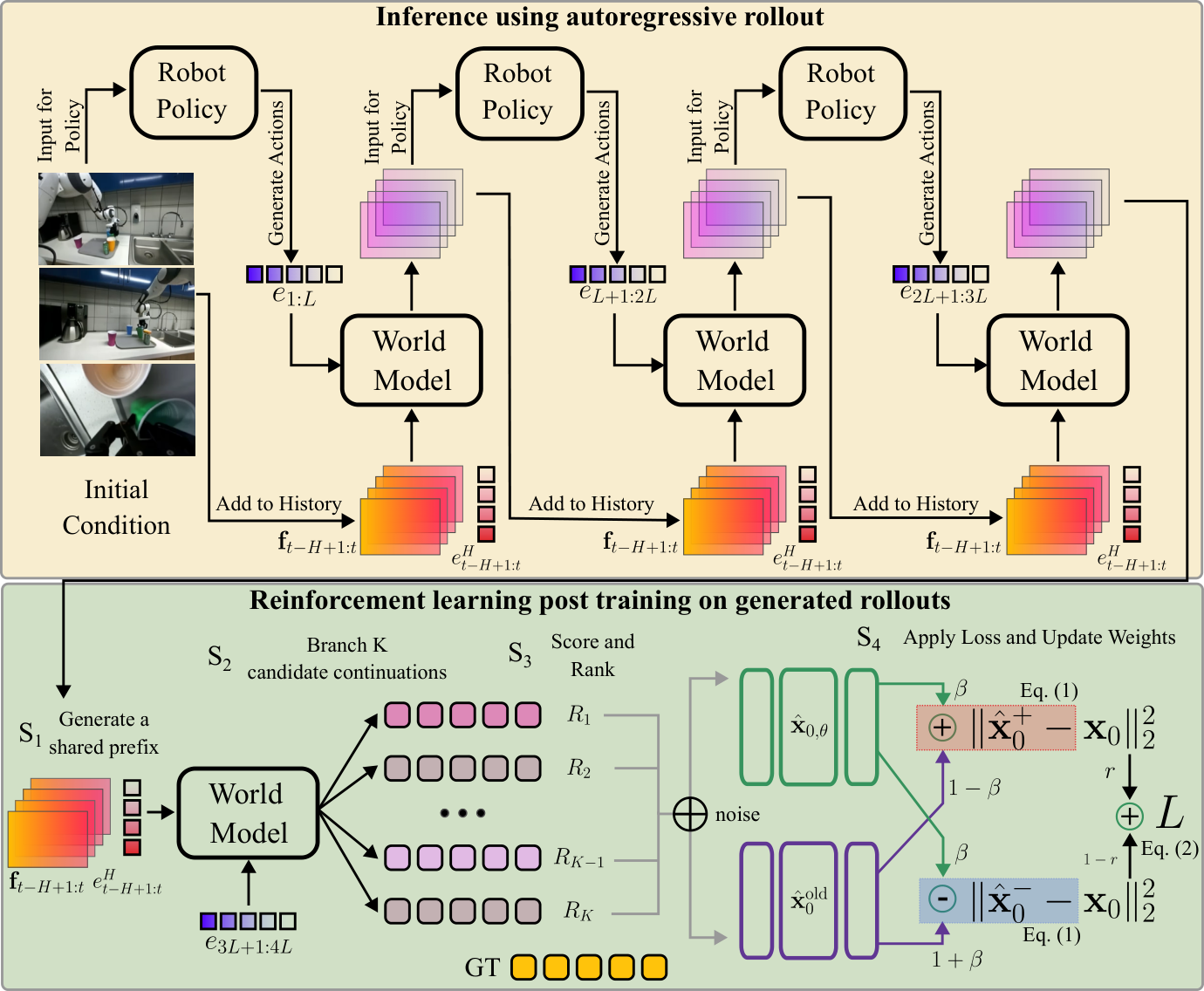

Action-conditioned robot world models generate future video frames given a robot action sequence, but they break down during long-horizon autoregressive deployment: each predicted clip is fed back as context for the next, so errors compound and visual quality degrades rapidly. This failure mode is known as the closed-loop gap. We present PersistWorld, an RL post-training framework that trains the world model directly on its own autoregressive rollouts, using contrastive denoising with multi-view perceptual rewards.
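To make the closed-loop setting concrete, here is a minimal sketch of an autoregressive rollout in which each predicted clip becomes the context for the next step. It is an illustration only: `autoregressive_rollout`, `toy_model`, and the list-of-floats `Frame` type are hypothetical stand-ins and not part of the PersistWorld codebase, and the toy predictor adds a fixed 0.01 bias per step simply to show how a small per-step error accumulates over 14 steps.

```python
from typing import Callable, List, Sequence

Frame = List[float]  # toy stand-in for an image: a flat list of pixel values


def autoregressive_rollout(
    predict_clip: Callable[[List[Frame], Sequence[float]], List[Frame]],
    initial_context: List[Frame],
    action_chunks: Sequence[Sequence[float]],
    context_len: int,
) -> List[Frame]:
    """Roll the model forward, feeding each predicted clip back as context."""
    context = list(initial_context)
    generated: List[Frame] = []
    for actions in action_chunks:
        clip = predict_clip(context, actions)
        generated.extend(clip)
        # Closed loop: the next step conditions on the model's own output,
        # not on ground-truth frames, so any error is carried forward.
        context = (context + clip)[-context_len:]
    return generated


def toy_model(context: List[Frame], actions: Sequence[float]) -> List[Frame]:
    """Hypothetical predictor: last frame + action + a small constant bias."""
    last = context[-1]
    return [[p + actions[0] + 0.01 for p in last]]


# 14 rollout steps with zero actions: the ideal output would stay at 0.0.
frames = autoregressive_rollout(toy_model, [[0.0]], [[0.0]] * 14, context_len=1)
drift = [f[0] for f in frames]
print(drift[0], drift[-1])  # the error grows with every step
```

In this toy setup the per-step bias is constant, so the drift grows linearly; a real video model can degrade faster because its errors also shift the context away from the training distribution.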

Method

PersistWorld addresses the closed-loop gap via online RL post-training. Rather than training on ground-truth history, we run the model autoregressively on its own outputs, branch K=16 candidate continuations from a shared rollout history, rank them with multi-view perceptual rewards (LPIPS, SSIM, PSNR), and update lightweight LoRA adapters and the action encoder using a contrastive denoising objective.
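The branch-and-rank step can be sketched as follows. This is a simplified illustration under stated assumptions, not the authors' implementation: it scores candidates with PSNR only (LPIPS needs a learned network and SSIM a windowed computation), averages the score over camera views with equal weights, and uses hypothetical helper names (`psnr`, `multi_view_reward`, `rank_candidates`). How PersistWorld actually combines LPIPS, SSIM, and PSNR is not specified here.

```python
import math
from typing import List, Sequence

View = Sequence[float]  # toy stand-in for one camera view (flat pixel list)


def psnr(pred: View, target: View, max_val: float = 1.0) -> float:
    """Peak signal-to-noise ratio in dB for pixel values in [0, max_val]."""
    mse = sum((p - t) ** 2 for p, t in zip(pred, target)) / len(target)
    if mse == 0:
        return float("inf")
    return 10.0 * math.log10(max_val ** 2 / mse)


def multi_view_reward(candidate: Sequence[View], target: Sequence[View]) -> float:
    """Assumed reward: unweighted mean of per-view PSNR."""
    scores = [psnr(c, t) for c, t in zip(candidate, target)]
    return sum(scores) / len(scores)


def rank_candidates(
    candidates: Sequence[Sequence[View]], target: Sequence[View]
) -> List[int]:
    """Return candidate indices sorted from highest to lowest reward."""
    rewards = [multi_view_reward(c, target) for c in candidates]
    return sorted(range(len(candidates)), key=lambda i: rewards[i], reverse=True)


# Two camera views and K=16 candidates that deviate from the target by a
# growing offset, so candidate 0 is the closest and candidate 15 the farthest.
target_views = [[0.5, 0.5], [0.2, 0.8]]
K = 16
candidates = [
    [[p + 0.01 * (k + 1) for p in view] for view in target_views]
    for k in range(K)
]
order = rank_candidates(candidates, target_views)
print(order[:3], order[-3:])
```

The resulting ordering is what a contrastive objective would consume, for example by treating higher-ranked continuations as preferred over lower-ranked ones; the exact split is an assumption left out of this sketch.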

Results

PersistWorld sets a new state of the art on the DROID dataset for 14-step autoregressive rollouts (≈11 s):

| Cameras | Model | SSIM ↑ | PSNR ↑ | LPIPS ↓ |
|---|---|---|---|---|
| External | WPE | 0.77 | 20.33 | 0.131 |
| External | IRASim | 0.77 | 21.36 | 0.117 |
| External | Ctrl-World (paper) | 0.83 | 23.56 | 0.091 |
| External | Ctrl-World (repro) | 0.84 | 23.02 | 0.081 |
| External | PersistWorld (Ours) | 0.86 | 24.42 | 0.070 |
| Wrist | Ctrl-World (repro) | 0.62 | 17.80 | 0.310 |
| Wrist | PersistWorld (Ours) | 0.67 | 19.39 | 0.277 |

- LPIPS reduced by 14% on external cameras

- SSIM improved by 9.1% on the wrist camera

- Wins ~98% of paired comparisons ($p < 10^{-6}$)

- 80% preference rate in a blind human study (n=200)
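As a quick sanity check, the external-camera LPIPS reduction can be recomputed from the table. The sketch below assumes the reference point is the Ctrl-World (repro) row, which the summary does not state explicitly; `relative_change_percent` is a hypothetical helper. Percentages derived from the rounded table entries may differ slightly from figures computed on unrounded metrics.

```python
def relative_change_percent(baseline: float, new: float) -> float:
    """Signed relative change of `new` with respect to `baseline`, in percent."""
    return (new - baseline) / baseline * 100.0


# External cameras, LPIPS (lower is better):
# Ctrl-World (repro) = 0.081, PersistWorld = 0.070 (values from the table).
lpips_external = relative_change_percent(0.081, 0.070)
print(f"{lpips_external:.1f}%")  # negative value means LPIPS went down
```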

Model Details

This checkpoint is a fine-tuned version of Ctrl-World, which is itself built on top of Stable Video Diffusion (SVD).

The weights file contains the merged UNet + action adapter weights. At inference time, the SVD pipeline is loaded from the HuggingFace Hub and this checkpoint is applied on top.

Training data: DROID robot manipulation dataset.

Usage

    git clone https://github.com/Jai2500/PersistWorld
    cd PersistWorld
    pip install -r requirements.txt

    # Download checkpoint
    huggingface-cli download jaibrdhn/persistworld checkpoint-5760-merged.pt \
        --local-dir model_ckpt/

    # Run rollout
    PYTHONPATH=. python scripts/rollout_replay_traj.py \
        --dataset_root_path dataset_example \
        --dataset_meta_info_path dataset_meta_info \
        --dataset_names droid_subset \
        --ckpt_path model_ckpt/checkpoint-5760-merged.pt

See the GitHub repository for full installation and training instructions.

Citation

    @inproceedings{bardhan2026persistworld,
      title     = {PersistWorld: Stabilizing Multi-step Robot World Model Rollouts
                   via Reinforcement Learning},
      author    = {Bardhan, Jai and Drozd\'{i}k, Patrik and \v{S}ivic, Josef
                   and Petr\'{i}k, Vladim\'{i}r},
      booktitle = {ArXiv Preprint},
      year      = {2026}
    }

Base model: stabilityai/stable-video-diffusion-img2vid