AI & ML interests

This organization hosts models tuned for sentence and text embedding generation. They can be used with the sentence-transformers package.


SentenceTransformers 🤗 is a Python framework for using and training state-of-the-art embedding and reranker models. It can be used to compute embeddings from text, images, audio, or video using Sentence Transformer models (quickstart), to calculate similarity scores using Cross-Encoder (a.k.a. reranker) models (quickstart), or to generate sparse embeddings using Sparse Encoder models (quickstart).

Install the Sentence Transformers library.

```shell
pip install -U sentence-transformers
```

The usage is as simple as:

```python
from sentence_transformers import SentenceTransformer

# 1. Load a pretrained Sentence Transformer model
model = SentenceTransformer("sentence-transformers/all-MiniLM-L6-v2")

# The sentences to encode
sentences = [
    "The weather is lovely today.",
    "It's so sunny outside!",
    "He drove to the stadium.",
]

# 2. Calculate embeddings by calling model.encode()
embeddings = model.encode(sentences)
print(embeddings.shape)
# (3, 384)

# 3. Calculate the embedding similarities
similarities = model.similarity(embeddings, embeddings)
print(similarities)
# tensor([[1.0000, 0.6660, 0.1046],
#         [0.6660, 1.0000, 0.1411],
#         [0.1046, 0.1411, 1.0000]])
```
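For this model, `model.similarity` returns cosine similarities by default, which is why each sentence scores 1.0 against itself in the matrix above. To make that score concrete, here is a minimal pure-Python sketch of the same computation. The `cosine_similarity_matrix` helper and the toy 3-dimensional vectors are illustrative assumptions, not part of the library, which works on tensors rather than lists.

```python
import math


def cosine_similarity_matrix(a, b):
    """Return cosine similarities between every row of a and every row of b.

    Assumes all vectors are non-zero.
    """
    def normalize(vec):
        norm = math.sqrt(sum(x * x for x in vec))
        return [x / norm for x in vec]

    a_unit = [normalize(v) for v in a]
    b_unit = [normalize(v) for v in b]
    # The dot product of two unit vectors is their cosine similarity.
    return [[sum(x * y for x, y in zip(u, v)) for v in b_unit] for u in a_unit]


# Toy vectors standing in for real embeddings (hypothetical values).
toy_embeddings = [
    [1.0, 0.0, 0.0],
    [1.0, 1.0, 0.0],
    [0.0, 0.0, 2.0],
]
similarities = cosine_similarity_matrix(toy_embeddings, toy_embeddings)
for row in similarities:
    print([round(score, 4) for score in row])
```

Because the vectors are normalized first, only their direction matters: the third toy vector has length 2 but still scores 1.0 against itself.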

Hugging Face makes it easy to collaboratively build and showcase your Sentence Transformers models! You can collaborate with your organization, and upload and showcase your own models in your profile ❤️

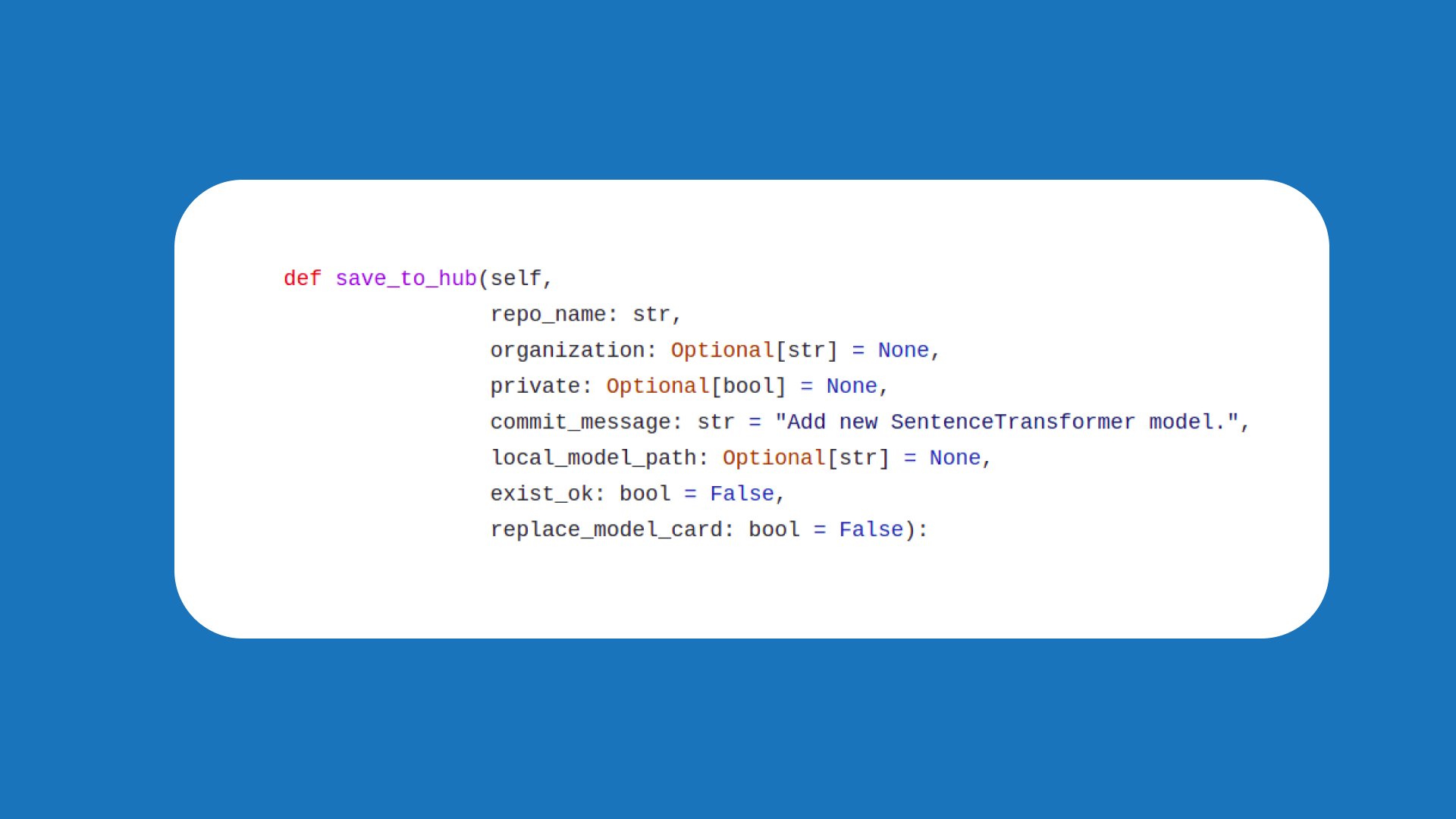

To upload your Sentence Transformers models to the Hugging Face Hub, log in with `huggingface-cli login` and use the `push_to_hub` method within the Sentence Transformers library.

```python
from sentence_transformers import SentenceTransformer

# Load or train a model
model = SentenceTransformer(...)

# Push to Hub
model.push_to_hub("my_new_model")
```

Learn more

Training guides:

- Training and Finetuning Embedding Models with Sentence Transformers: end-to-end training of bi-encoder embedding models.

- Training and Finetuning Reranker Models with Sentence Transformers: training Cross Encoder models for the second stage of retrieve-and-rerank pipelines.

- Training and Finetuning Sparse Embedding Models with Sentence Transformers: training SPLADE and other sparse encoders.
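The retrieve-and-rerank pipeline mentioned in the reranker guide has two stages: a fast embedding comparison narrows the corpus to a few candidates, then a slower pair scorer reorders only those candidates. The sketch below shows that control flow only. `toy_embed` and `toy_pair_score` are hypothetical word-overlap stand-ins for an embedding model and a Cross Encoder, chosen so the example runs without downloading any model.

```python
import math
from collections import Counter


def toy_embed(text):
    """Hypothetical stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(text.lower().split())


def toy_cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[token] * b[token] for token in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def toy_pair_score(query, doc):
    """Hypothetical stand-in for a reranker: scores the (query, doc) pair jointly."""
    query_tokens = query.lower().split()
    doc_tokens = set(doc.lower().split())
    return sum(token in doc_tokens for token in query_tokens) / len(query_tokens)


def retrieve_and_rerank(query, docs, top_k=2):
    # Stage 1: embed query and documents independently, keep the top_k most similar.
    # Document vectors do not depend on the query, so they could be precomputed.
    query_vec = toy_embed(query)
    doc_vecs = [toy_embed(doc) for doc in docs]
    candidates = sorted(
        range(len(docs)),
        key=lambda i: toy_cosine(query_vec, doc_vecs[i]),
        reverse=True,
    )[:top_k]
    # Stage 2: rescore only the retrieved candidates with the pair scorer.
    reranked = sorted(candidates, key=lambda i: toy_pair_score(query, docs[i]), reverse=True)
    return [docs[i] for i in reranked]


docs = [
    "the stadium hosts football games",
    "he drove to the stadium",
    "the weather is lovely today",
]
results = retrieve_and_rerank("who drove to the stadium", docs, top_k=2)
print(results)
```

The point of the split is cost: the second stage runs once per candidate rather than once per document in the corpus, so `top_k` bounds how much work the more expensive scorer does.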

Multimodal:

- Multimodal Embedding & Reranker Models with Sentence Transformers: using text, image, audio, and video models through a single API.

- Training and Finetuning Multimodal Embedding & Reranker Models with Sentence Transformers: training multimodal models, with a Visual Document Retrieval walkthrough.

Efficiency techniques:

- 🪆 Introduction to Matryoshka Embedding Models: variable-size embeddings that can be truncated with minimal quality loss.

- Train 400x faster Static Embedding Models with Sentence Transformers: CPU-friendly embedding models without attention.

- Binary and Scalar Embedding Quantization for Significantly Faster & Cheaper Retrieval: post-training compression of embedding vectors.
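Two of the techniques above reduce to simple vector operations, sketched here in pure Python on made-up 4-dimensional vectors. Truncation keeps the leading dimensions and re-normalizes, which only preserves quality for models trained for it. Binary quantization keeps one sign bit per dimension and compares vectors by Hamming distance. The helper names are illustrative assumptions, and this sketch does not use the library's own utilities for either technique.

```python
import math


def truncate_embedding(embedding, dim):
    """Keep the first `dim` values and re-normalize to unit length."""
    head = embedding[:dim]
    norm = math.sqrt(sum(x * x for x in head))
    return [x / norm for x in head] if norm else head


def binarize(embedding):
    """Binary quantization: one bit per dimension (1 if positive), packed into an int."""
    bits = 0
    for value in embedding:
        bits = (bits << 1) | (1 if value > 0 else 0)
    return bits


def hamming_distance(bits_a, bits_b):
    """Number of differing bits; lower means more similar."""
    return bin(bits_a ^ bits_b).count("1")


# Hypothetical toy embeddings; real models produce hundreds of dimensions.
full_a = [3.0, -4.0, 1.0, 2.0]
full_b = [3.0, 4.0, -1.0, 2.0]

short_a = truncate_embedding(full_a, 2)
bits_a = binarize(full_a)
bits_b = binarize(full_b)
distance = hamming_distance(bits_a, bits_b)

print(short_a)
print(format(bits_a, "04b"), format(bits_b, "04b"), distance)
```

Storing one bit instead of a 32-bit float per dimension is where the 32x memory reduction of binary quantization comes from, and the XOR-plus-popcount comparison is much cheaper than a floating-point dot product.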